The limits of language

May 07, 2026

If you’ve worked a cash register, congrats! You possess one of the fundamental skills required to design for human-AI interactions: quickly interpreting and fulfilling vague (and sometimes contradictory) requests. Most of the time you just need to bridge the gap between the customer’s knowledge and yours, like with one lady who struck fear into my heart when she said she had a crazy idea.

“Is it possible to get a latte but without the milk? Like maybe hot water instead? I know it might be weird,” she asked sheepishly, and I think I laughed out of relief.

“That’s not weird at all. I actually have great news — that drink is super common, it’s called an Americano. I’ll get that started for you now.”

Other times, you really need to rub those brain cells together.

Unlike most of our customers, the man who walked into the coffee shop on this particular spring day was not one of the regulars who lived in one of the nearby neighborhoods.

“I'll have a large coffee with four shots of cappuccino,” he ordered in the terse, offhanded tone that customers tend to use when they’re in a rush.

“Sorry, could you repeat that?” I hoped I had misheard him.

“A large coffee with four shots of cappuccino,” he repeated pointedly. Unfortunately, my hearing was fine.

“Just to clarify, are you looking for five drinks, a large coffee and four cappuccinos? Or did you want a large shot in the dark, so a drip coffee with four shots of espresso in it?” This was not your standard order deciphering task. If you aren't familiar with coffee, all you need to know is that a cappuccino is a drink, not an ingredient — not really the kind of thing you can just add onto another drink.

“Just give me a large coffee with four shots of cappuccino.” This time, he overenunciated in the way people do when they think you’re stupid.

I rang him up for a large quad shot in the dark, scribbling his actual request on the ticket before handing it to my coworker.

“Thanks, we’ll have your drink ready on the bar in a minute.” My coworker read the ticket, then joined me behind the pastry case (difficult to hear through) to discuss the bizarre order.

“Does he want a quad shot in the dark?”

“No idea, he wouldn’t explain.”

“A drip and four cappuccinos?”

“I asked about that too.”

My coworker stared at me. I shrugged.

“I’m going to make him a quad shot in the dark au lait,” he declared, standing up fully now that we didn’t need to talk quietly.

When the customer picked up his drink, he stared at it for a moment. He looked confused and, in a way, it's kind of beautiful that we were all equally baffled by his order.

“Your large coffee with four shots of cappuccino,” my coworker chirped, throwing him a smile.

Customers often lack the vocabulary to describe what they want, so bridging those gaps is part of the job. Neither four shots of cappuccino guy nor Americano lady understood what could be done, but the customer who gave the best description got the right drink. I'm sure that what the four shots of cappuccino guy actually wanted was quite doable. But in that moment, the order was unfulfillable because the customer didn’t understand what he wanted and expected me to be able to interpret his request without additional guidance.

And you know what? I get it. Having to repeat yourself sucks. Explaining something that feels obvious is a drag. When Claude asks me too many follow-up questions, I smash “skip” and get annoyed when it doesn’t do what I wanted it to. Nobody likes to feel pestered, but it feels like AI tools are becoming "needier" as they ask for more input from us.

When we started building Gamma's first AI generator in early 2023, most products on the market took a "sky's the limit" approach. They wanted you to know that anything was possible and a lot of them looked kind of like this:

We actually designed a screen like this too. But when I thought about how many people struggle to pin down a coffee order, I became more and more skeptical of the big blank box.

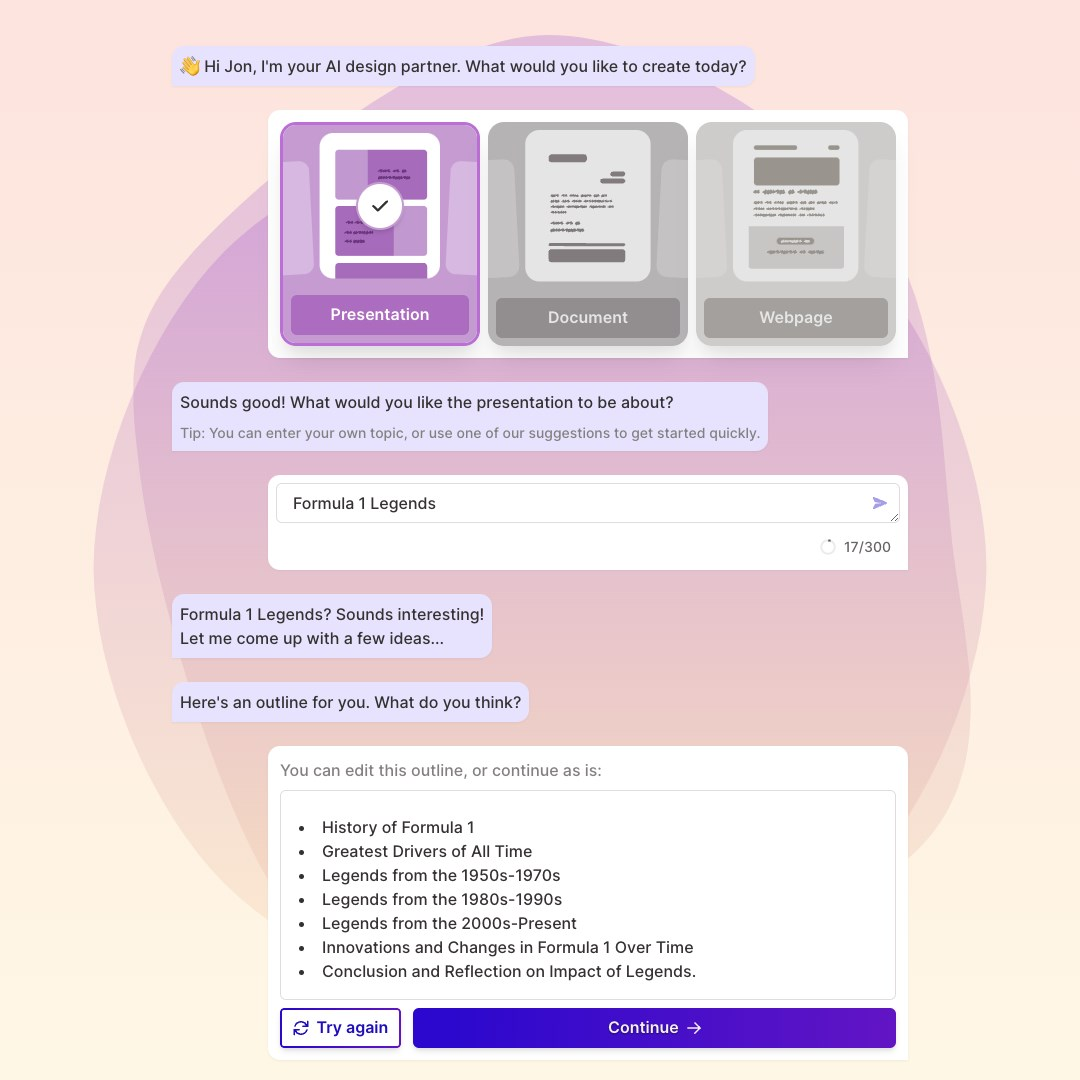

As part of an AI Hack Day, I worked with Jordan to prototype a flow that asked what you wanted to make, let you enter a topic or pick an example, and showed you a short, editable outline to make sure that you and Gamma were on the same page before you hit generate and spent your credits (our money).

AI images were pretty bonkers, so we excluded them from the generator, not wanting the weird limbs and appendages to distract people or scare them away. I wrote all of the example prompts, optimizing for topics that gave you pretty web search images like fabulous frogs, coral reef ecology, and San Francisco architecture.

We didn't have the luxury of showing people that the sky was the limit — we had to get them to make something that looked good as fast as possible. There would be no second chance.

Seven-ish weeks and one SVB collapse later, we launched. It’s been a blur ever since.

There was no single magic bullet that made Gamma thrive where others flailed. But I think we were able to make better decisions faster because we assumed that: a) our users knew very little about prompting and b) they were not going to learn how to prompt engineer just to get a better result from Gamma. Imitation can be a sign that you're doing something right, and within months every AI presentation product had added an outline step to their generator, moving away from the big blank box.

Nowadays, chat is the standard interface for AI and it’s easy to see why. It's approachable, with little to no learning curve. You can inject brand personality into it. The requests can reveal new use cases, product gaps, and feedback sources. (On the flip side, if there's an input box, people will try to enter a prompt into it no matter what it's for ¯_(ツ)_/¯)

Chat also expands the surface area for possibility and failure simultaneously. Prompts matter, so a user’s ability to explain and clarify their thoughts is more important than ever. When things go awry, was it because the tool is incapable of fulfilling the request or did the user lack the language to give the tool adequate directions?

In the age of AI, surfacing features is just one side of the discoverability coin; the other is how well the user writing the prompt understands what they're asking and how to ask it.

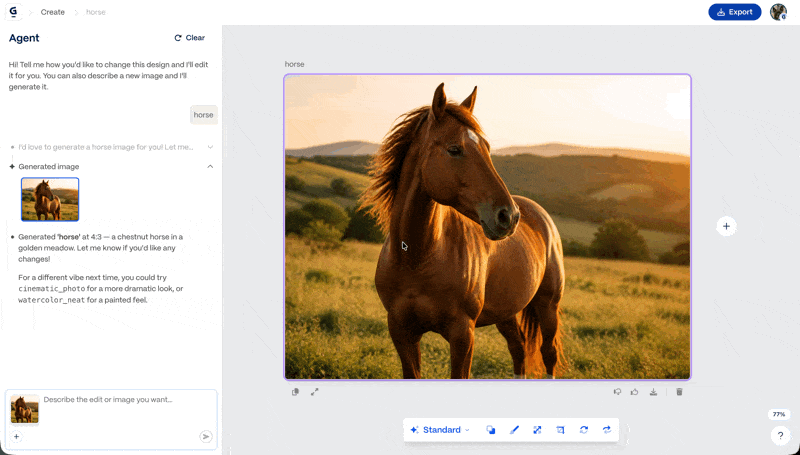

I ran headlong into these speed bumps a few months ago while making a presentation. I asked our new image generation product, Gamma Imagine, to take a bee character I'd created and "make [it] drive a convertible". What I got was cute, but it wasn't what I had envisioned.

"Face the other way" flipped the image horizontally or made the bee turn its head. "Make it more cinematic" just changed the lighting. Taking a step back, I thought about composition and how I would approach this as a photograph...

make an image of this bee driving a convertible away from the camera into a sunset, wearing sunglasses, face visible in rearview

...and I got exactly what I wanted! Possibility was not the problem. But how does someone without a background in design or photography accomplish the same thing?

Time to think outside the (input) box — when language fails, people need other tools to achieve their goals.

Video games have been solving this type of problem for decades. I've loved The Sims for most of my life and even if I've never enjoyed making sims, I appreciate the level of detail and control that Create A Sim gives you. Want to see what your sim looks like from the side? Easy, click/drag to rotate around or use the keyboard shortcuts (or those giant arrows). Why couldn't something like this make it easier for users to change the perspective of an image?

Well, it can! One Devin session later and I had a working prototype. Rotate the cylinder, hit "Regenerate", and marvel at your image from a new angle.
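
For the curious, here's a minimal sketch of how a control like this could turn a rotation into words the image model understands. To be clear, this is an illustration and not the actual prototype: the function names, the eight angle buckets, and the exact phrasing are all assumptions I made up for the example.

```python
# Hypothetical sketch: translate a rotation control into prompt language.
# Convention assumed here: 0 degrees means the subject faces the camera.

# Eight 45-degree buckets, starting at the front and rotating around the subject.
ANGLE_PHRASES = [
    "seen from the front, facing the camera",
    "seen in a front three-quarter view",
    "seen from the side, in profile",
    "seen in a rear three-quarter view",
    "seen from behind, facing away from the camera",
    "seen in a rear three-quarter view from the opposite side",
    "seen from the opposite side, in profile",
    "seen in a front three-quarter view from the opposite side",
]


def describe_camera_angle(yaw_degrees: float) -> str:
    """Return a plain-language phrase for the nearest 45-degree bucket."""
    # Shift by half a bucket so each phrase is centered on its angle,
    # and wrap so negative or >360 values still land in a valid bucket.
    bucket = int(((yaw_degrees + 22.5) % 360) // 45)
    return ANGLE_PHRASES[bucket]


def build_prompt(base_prompt: str, yaw_degrees: float) -> str:
    """Append the camera phrase to whatever the user already typed."""
    return f"{base_prompt}, {describe_camera_angle(yaw_degrees)}"


if __name__ == "__main__":
    print(build_prompt("a bee driving a convertible", 180))
```

The point of a mapping like this is that the user never has to know the phrase "rear three-quarter view" exists. They drag, the tool finds the words.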

Pushing the boundaries of what's possible isn't always a product, a business, or even launchworthy. Sometimes it's a little tool to bridge the gap between what is possible and what users have the words to describe. When every day brings a new announcement, it can be hard to find your footing. But about one thing I feel very certain: the more bridges we build, the more people will imagine ideas and futures that they never thought were possible for them to create before.

And then what?

Who knows. Let's find out.